These images are from the first implementation of my backend, where I had accidentally called normalize() on a vector that was already almost normalized. The result is output that is not pixel-identical to the standard ARB2 backend, plus the cost of a pointless normalization in the fragment shader.
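To make the effect concrete, here is a rough sketch in Python (not the actual shader code) of how renormalizing a nearly unit-length vector can shift a lighting term by a few 8-bit steps. The vectors, the simple dot-product lighting term, and the helper names are all made up for illustration; the real shader math is more involved.

```python
import math

def normalize(v):
    """Scale v to unit length, like GLSL's normalize()."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def to_8bit(x):
    """Quantize a [0, 1] value to an 8-bit colour channel."""
    return max(0, min(255, round(x * 255.0)))

# Hypothetical inputs: an interpolated vector whose length is 0.98
# rather than exactly 1.0, and a unit-length light direction.
almost_unit = (0.0, 0.0, 0.98)
light_dir = (0.0, 0.6, 0.8)

# Using the vector as-is versus with the extra normalize() call.
as_is = to_8bit(dot(almost_unit, light_dir))
renormalized = to_8bit(dot(normalize(almost_unit), light_dir))

print(as_is, renormalized)
```

A difference of a few levels out of 255 per channel is easy to miss by eye, but it is enough to break an exact comparison against a reference image.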

You can also see the importance of running a comparison or image-diff program when implementing a new backend. Can you see the differences between the first two images immediately, with the naked eye? I couldn't.

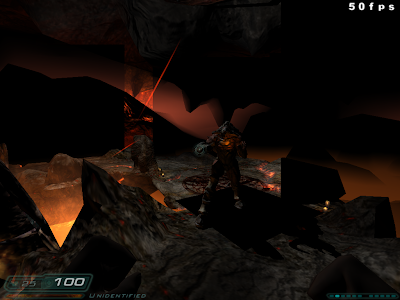
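For anyone who wants to try this, a minimal image-diff sketch in Python follows. It is not the tool I used; it assumes both images are already decoded into rows of (r, g, b) tuples of the same size, and the `gain` parameter is an arbitrary choice that amplifies small differences so they become visible.

```python
def image_diff(a, b, gain=16):
    """Return (diff_image, changed_pixel_count) for two same-sized images.

    Each image is a list of rows, each row a list of (r, g, b) tuples.
    Per-channel absolute differences are multiplied by `gain` and
    clamped to 255 so that tiny mismatches stand out.
    """
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("images must have the same dimensions")

    diff = []
    changed = 0
    for row_a, row_b in zip(a, b):
        out_row = []
        for pixel_a, pixel_b in zip(row_a, row_b):
            delta = tuple(abs(x - y) for x, y in zip(pixel_a, pixel_b))
            if any(delta):
                changed += 1
            out_row.append(tuple(min(255, d * gain) for d in delta))
        diff.append(out_row)
    return diff, changed

# Tiny made-up example: one pixel differs by 4 levels in the red channel.
reference = [[(200, 200, 200), (10, 20, 30)]]
candidate = [[(204, 200, 200), (10, 20, 30)]]

diff, changed = image_diff(reference, candidate)
print(diff, changed)
```

A non-zero changed-pixel count is the signal to go hunting, even when the two screenshots look identical side by side.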

Finally, here is the backend running the hellhole level. The black regions are areas that would be rendered by the (currently unimplemented in GLSL) heatHaze shader. Not bad for an i965 GPU.

Just for laughs, here is what happens when Doom 3 decides to try LSD, or rather when it fails to pass initialized texture coordinates from the vertex program to the fragment program in the ARB2 backend.

Is it bad that I want to play in something that looks like the image diff? That looks awesome; reminds me of NPR Quake.

I don't know whether it's bad. It might cause a migraine after a few hours. ;-)

However, it would not be difficult to implement such a rendering mode: basically a few lines of GLSL and a very minor performance hit (1-2 FPS or less).

Support for OpenGL ES 2.0 is also under development, along with a few other development branches. Nothing release-worthy yet, though.

I wonder if I can run Doom 3 on an ARM Linux PC? It would be great to run it on a PC that uses less than 5 watts, unlike any x86 CPU available right now.

Yes, it's trivial to compile for the ARM architecture. However, performance will suffer, especially on low-power hardware, and how much depends on the GPU available; SGX, for example, seems to have a particularly difficult time with multi-pass renderers.

x86 also has the advantage of having SIMD code already available, although you could implement a NEON backend relatively easily, if you're familiar with ARM NEON assembly programming.

In any case, you're going to be GPU bound rather than CPU bound, and a SIMD backend isn't going to solve that problem.